Contents

What is a framework

How to learn a framework

What is Scrapy

Basic usage of Scrapy

Environment setup

Basic usage

What is a framework

A framework is a project template that bundles many features and is highly general-purpose.

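How to learn a framework

- First learn the detailed usage of the features the framework encapsulates.

- Then go deeper and read the underlying source code behind those features.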

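What is Scrapy

- A well-known, ready-made framework for writing web crawlers.

- Features: high-performance persistent storage, asynchronous data downloading, high-performance data parsing, and distributed crawling (the last usually through an extension such as scrapy-redis rather than Scrapy alone).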

Basic usage of Scrapy

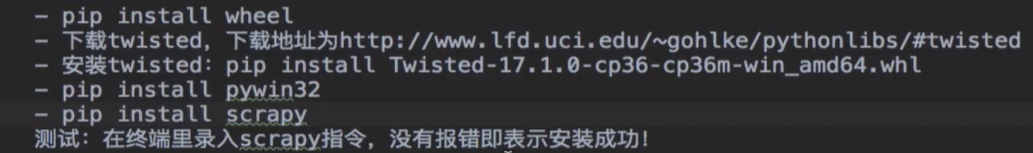

Environment setup

Mac/Linux:

pip install scrapy

Windows:

In a PyCharm virtual environment, running pip install scrapy directly downloads all the required whl files:

(venv) D:\pychram\spider>pip install scrapy

Collecting scrapy

Downloading Scrapy-2.3.0-py2.py3-none-any.whl (237 kB)

|████████████████████████████████| 237 kB 20 kB/s

Collecting protego>=0.1.15

Downloading Protego-0.1.16.tar.gz (3.2 MB)

|████████████████████████████████| 3.2 MB 8.9 kB/s

Collecting w3lib>=1.17.0

Downloading w3lib-1.22.0-py2.py3-none-any.whl (20 kB)

Collecting PyDispatcher>=2.0.5

Downloading PyDispatcher-2.0.5.tar.gz (34 kB)

Collecting Twisted>=17.9.0

Downloading Twisted-20.3.0-cp37-cp37m-win_amd64.whl (3.1 MB)

|████████████████████████████████| 3.1 MB 26 kB/s

Requirement already satisfied: lxml>=3.5.0 in d:\pychram\spider\venv\lib\site-packages (from scrapy) (4.5.2)

Collecting parsel>=1.5.0

Downloading parsel-1.6.0-py2.py3-none-any.whl (13 kB)

Collecting pyOpenSSL>=16.2.0

Downloading pyOpenSSL-19.1.0-py2.py3-none-any.whl (53 kB)

|████████████████████████████████| 53 kB 26 kB/s

Collecting cssselect>=0.9.1

Downloading cssselect-1.1.0-py2.py3-none-any.whl (16 kB)

Collecting queuelib>=1.4.2

Downloading queuelib-1.5.0-py2.py3-none-any.whl (13 kB)

Collecting zope.interface>=4.1.3

Downloading zope.interface-5.1.0-cp37-cp37m-win_amd64.whl (194 kB)

|████████████████████████████████| 194 kB 26 kB/s

Collecting cryptography>=2.0

Downloading cryptography-3.0-cp37-cp37m-win_amd64.whl (1.5 MB)

|████████████████████████████████| 1.5 MB 18 kB/s

Collecting itemloaders>=1.0.1

Downloading itemloaders-1.0.2-py3-none-any.whl (11 kB)

Collecting service-identity>=16.0.0

Downloading service_identity-18.1.0-py2.py3-none-any.whl (11 kB)

Collecting itemadapter>=0.1.0

Downloading itemadapter-0.1.0-py3-none-any.whl (7.0 kB)

Collecting six

Using cached six-1.15.0-py2.py3-none-any.whl (10 kB)

Requirement already satisfied: attrs>=19.2.0 in d:\pychram\spider\venv\lib\site-packages (from Twisted>=17.9.0->scrapy) (19.3.0)

Collecting constantly>=15.1

Downloading constantly-15.1.0-py2.py3-none-any.whl (7.9 kB)

Collecting Automat>=0.3.0

Downloading Automat-20.2.0-py2.py3-none-any.whl (31 kB)

Collecting PyHamcrest!=1.10.0,>=1.9.0

Downloading PyHamcrest-2.0.2-py3-none-any.whl (52 kB)

|████████████████████████████████| 52 kB 28 kB/s

Collecting hyperlink>=17.1.1

Downloading hyperlink-20.0.1-py2.py3-none-any.whl (48 kB)

|████████████████████████████████| 48 kB 36 kB/s

Collecting incremental>=16.10.1

Downloading incremental-17.5.0-py2.py3-none-any.whl (16 kB)

Requirement already satisfied: setuptools in d:\pychram\spider\venv\lib\site-packages (from zope.interface>=4.1.3->scrapy) (47.3.1)

Requirement already satisfied: cffi!=1.11.3,>=1.8 in d:\pychram\spider\venv\lib\site-packages (from cryptography>=2.0->scrapy) (1.14.0)

Collecting jmespath>=0.9.5

Downloading jmespath-0.10.0-py2.py3-none-any.whl (24 kB)

Collecting pyasn1

Downloading pyasn1-0.4.8-py2.py3-none-any.whl (77 kB)

|████████████████████████████████| 77 kB 31 kB/s

Collecting pyasn1-modules

Downloading pyasn1_modules-0.2.8-py2.py3-none-any.whl (155 kB)

|████████████████████████████████| 155 kB 13 kB/s

Requirement already satisfied: idna>=2.5 in d:\pychram\spider\venv\lib\site-packages (from hyperlink>=17.1.1->Twisted>=17.9.0->scrapy) (2.10)

Requirement already satisfied: pycparser in d:\pychram\spider\venv\lib\site-packages (from cffi!=1.11.3,>=1.8->cryptography>=2.0->scrapy) (2.20)

Building wheels for collected packages: protego, PyDispatcher

Building wheel for protego (setup.py) ... done

Created wheel for protego: filename=Protego-0.1.16-py3-none-any.whl size=7769 sha256=2cbf8d0ea70c25086daef895e28b8c6bd052d72dcbecb18dc

Stored in directory: c:\users\inspur\appdata\local\pip\cache\wheels\ca\44\01\3592ccfbcfaee4ab297c4097e6e9dbe1c7697e3531a39877ab

Building wheel for PyDispatcher (setup.py) ... done

Created wheel for PyDispatcher: filename=PyDispatcher-2.0.5-py3-none-any.whl size=12552 sha256=b8a36f20c079dabe458ecddd530daeb0506a376f8de22f17714f0d811b

Stored in directory: c:\users\inspur\appdata\local\pip\cache\wheels\dc\d0\bf\0cc715c01fce0bace63b46283acf5cc630d5e5dbb4602c54e5

Successfully built protego PyDispatcher

Installing collected packages: six, protego, w3lib, PyDispatcher, constantly, Automat, zope.interface, PyHamcrest, hyperlink, incremental, Twisted, cssselect, parsel, cryptography, pyOpenSSL, queuelib, itemadapter, jmespath, itemloaders, pyasn1, pyasn1-modules, service-identity, scrapy

Successfully installed Automat-20.2.0 PyDispatcher-2.0.5 PyHamcrest-2.0.2 Twisted-20.3.0 constantly-15.1.0 cryptography-3.0 cssselect-1.1.0 hyperlink-20.0.1 incremental-17.5.0 itemadapter-0.1.0 itemloaders-1.0.2 jmespath-0.10.0 parsel-1.6.0 protego-0.1.16 pyOpenSSL-19.1.0 pyasn1-0.4.8 pyasn1-modules-0.2.8 queuelib-1.5.0 scrapy-2.3.0 service-identity-18.1.0 six-1.15.0 w3lib-1.22.0 zope.interface-5.1.0
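After the install finishes, it can be handy to confirm from Python that the package is visible in the active environment. The following is a minimal sketch, not part of the original tutorial: the helper name installed_version is hypothetical, and it assumes Python 3.8 or newer, where importlib.metadata is in the standard library.

```python
from importlib import metadata


def installed_version(package):
    """Return the installed version string of `package`, or None if it is not installed."""
    try:
        return metadata.version(package)
    except metadata.PackageNotFoundError:
        return None


# Report whether scrapy is available in the current interpreter's environment.
version = installed_version("scrapy")
if version is None:
    print("scrapy is not installed here; try: pip install scrapy")
else:
    print(f"scrapy {version} is installed")
```

If the message says scrapy is missing even though pip reported success, the script is probably running under a different interpreter than the virtual environment pip installed into.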

Basic usage

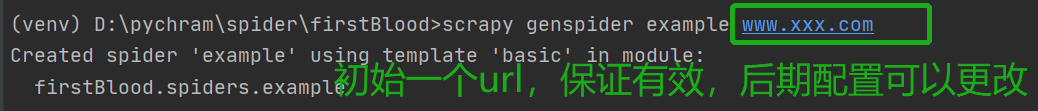

- Create a project: scrapy startproject followed by the project name. For example: scrapy startproject firstBlood

- cd firstBlood

- Create a spider file: scrapy genspider example example.com

Open the generated example file; this is where the spider code is written.

Console command and output:

- Run the project: scrapy crawl followed by the spider name (here, scrapy crawl example)
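The three commands in this section (startproject, genspider, crawl) can also be driven from a Python script, which is convenient when launching a crawl from an IDE. This is a sketch only: the helper names are hypothetical, and it assumes the scrapy executable is on PATH. The --nolog flag is Scrapy's standard option for suppressing log output.

```python
import subprocess


def startproject_cmd(project_name):
    """Build the command that creates a new Scrapy project."""
    return ["scrapy", "startproject", project_name]


def genspider_cmd(spider_name, domain):
    """Build the command that generates a spider file inside a project."""
    return ["scrapy", "genspider", spider_name, domain]


def crawl_cmd(spider_name, quiet=False):
    """Build the command that runs a spider; quiet=True adds --nolog."""
    cmd = ["scrapy", "crawl", spider_name]
    if quiet:
        cmd.append("--nolog")
    return cmd


def run(cmd, cwd=None):
    """Run a command in directory `cwd`, raising CalledProcessError on failure."""
    return subprocess.run(cmd, cwd=cwd, check=True)
```

A typical sequence would be run(startproject_cmd("firstBlood")), then run(genspider_cmd("example", "example.com"), cwd="firstBlood"), then run(crawl_cmd("example"), cwd="firstBlood"). The cwd argument stands in for the cd firstBlood step.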
