Cyclical Stochastic Gradient MCMC for Bayesian Deep Learning

This repository contains code for the paper

Cyclical Stochastic Gradient MCMC for Bayesian Deep Learning, accepted at the International Conference on Learning Representations (ICLR) 2020 as an Oral Presentation (acceptance rate = 1.85%).

Introduction

Cyclical Stochastic Gradient MCMC (cSG-MCMC) is proposed to efficiently explore complex multimodal distributions, such as those encountered with modern deep neural networks.

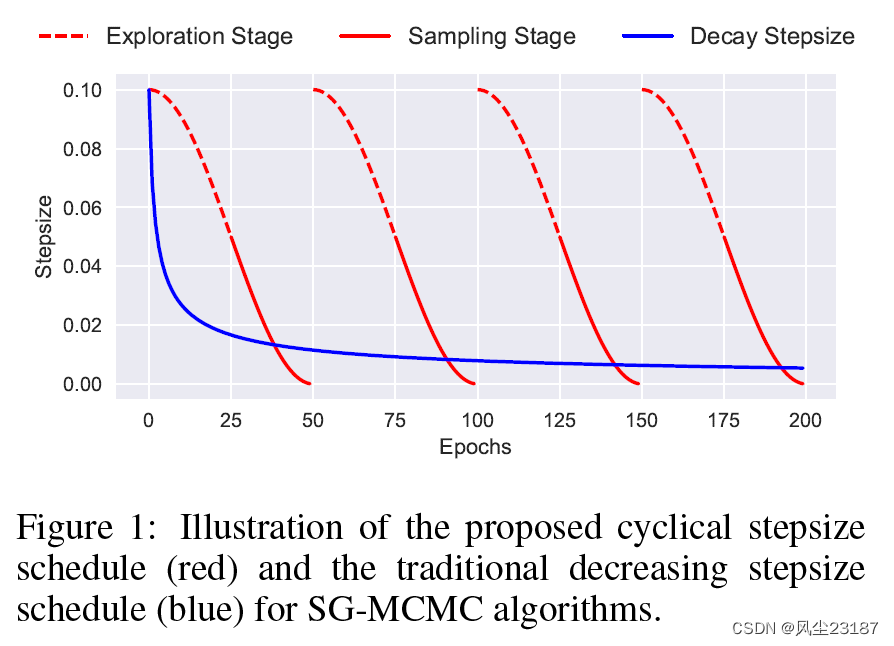

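The key idea is to adopt a cyclical stepsize schedule, in which larger steps discover new modes and smaller steps characterize each mode. The authors prove that the proposed learning rate schedule converges faster to samples from the stationary distribution than SG-MCMC with a standard decaying schedule. The figure illustrates the proposed cyclical stepsize schedule (red) and the traditional decreasing stepsize schedule (blue) for SG-MCMC algorithms. cSG-MCMC consists of two stages: exploration and sampling.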
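A minimal sketch of the cyclical schedule, assuming the cosine form used by `adjust_learning_rate` in the training code later in this post. The function name `cyclical_lr` and the toy values (1000 iterations, 4 cycles) are illustrative and do not come from the repository.

```python
import math

def cyclical_lr(k, total_iters, num_cycles, lr_0):
    """Cosine cyclical step size at iteration k (0-based)."""
    cycle_len = total_iters // num_cycles
    # Position inside the current cycle, mapped to [0, pi).
    pos = math.pi * (k % cycle_len) / cycle_len
    return 0.5 * lr_0 * (math.cos(pos) + 1.0)

# Toy schedule: 1000 iterations split into 4 cycles of 250 iterations each.
for k in (0, 125, 249, 250):
    print(k, cyclical_lr(k, total_iters=1000, num_cycles=4, lr_0=0.5))
```

Each cycle starts at `lr_0` (large steps, exploration), decays towards zero (small steps, sampling), and then jumps back to `lr_0` at the next cycle boundary.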

Environment (does not run on Windows; CUDA cannot be used inside a virtual machine)

- Python 2.7

- PyTorch 1.2.0

- torchvision 0.4.0

Experimental results

Test Error (%) on CIFAR-10 and CIFAR-100.

| Dataset | SGLD | cSGLD | SGHMC | cSGHMC |
|---|---|---|---|---|
| CIFAR-10 | 5.20 ± 0.06 | 4.29 ± 0.06 | 4.93 ± 0.1 | 4.27 ± 0.03 |
| CIFAR-100 | 23.23 ± 0.01 | 20.55 ± 0.06 | 22.60 ± 0.17 | 20.50 ± 0.11 |

Code walkthrough: csgmcmc and csghmc

1. Imports and arguments

    '''Train CIFAR10 with PyTorch.'''
    from __future__ import print_function
    import sys
    sys.path.append('..')
    import torch
    import torch.nn as nn
    import torch.optim as optim
    import torch.nn.functional as F
    import torch.backends.cudnn as cudnn
    import torchvision
    import torchvision.transforms as transforms
    import os
    import argparse
    from models import *
    from torch.autograd import Variable
    import numpy as np
    import random

    parser = argparse.ArgumentParser(description='cSG-MCMC CIFAR10 Training')
    parser.add_argument('--dir', type=str, default='/home/mpdeep/PycharmProjects/deepMC/csgmcmc/experiments/model',
                        help='path to save checkpoints')
    parser.add_argument('--data_path', type=str, default='/home/mpdeep/deepMCMC/csgmcmc/experiments/data/cifar-10-batches-py', metavar='PATH',
                        help='path to datasets location')
    parser.add_argument('--epochs', type=int, default=200,
                        help='number of epochs to train (default: 200)')
    parser.add_argument('--batch-size', type=int, default=64, metavar='N',
                        help='input batch size for training (default: 64)')
    parser.add_argument('--alpha', type=int, default=1,
                        help='1: SGLD')
    parser.add_argument('--device_id', type=int,
                        help='device id to use')
    parser.add_argument('--seed', type=int, default=1,
                        help='random seed')
    parser.add_argument('--temperature', type=float, default=1./50000,
                        help='temperature (default: 1/dataset_size)')

    args = parser.parse_args()
    device_id = args.device_id
    use_cuda = torch.cuda.is_available()

    # Seed every random number generator in use for reproducibility.
    torch.manual_seed(args.seed)
    np.random.seed(args.seed)
    random.seed(args.seed)

2. Data preparation

    # Data
    print('==> Preparing data..')
    transform_train = transforms.Compose([
        transforms.RandomCrop(32, padding=4),
        transforms.RandomHorizontalFlip(),
        transforms.ToTensor(),
        transforms.Normalize((0.4914, 0.4822, 0.4465), (0.2023, 0.1994, 0.2010)),
    ])

    transform_test = transforms.Compose([
        transforms.ToTensor(),
        transforms.Normalize((0.4914, 0.4822, 0.4465), (0.2023, 0.1994, 0.2010)),
    ])

    trainset = torchvision.datasets.CIFAR10(root=args.data_path, train=True, download=False, transform=transform_train)
    trainloader = torch.utils.data.DataLoader(trainset, batch_size=args.batch_size, shuffle=True, num_workers=0)

    testset = torchvision.datasets.CIFAR10(root=args.data_path, train=False, download=True, transform=transform_test)
    testloader = torch.utils.data.DataLoader(testset, batch_size=100, shuffle=False, num_workers=0)
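As a small illustration of what the `Normalize` step does to each pixel, here is a sketch in plain Python. `ToTensor` first scales pixel values to [0, 1]; `Normalize` then applies `(x - mean) / std` per channel. The helper name `normalize_pixel` is hypothetical, and the statistics are the ones passed to `transforms.Normalize` in the data code.

```python
# Per-channel statistics copied from the transforms used in the data code.
MEAN = (0.4914, 0.4822, 0.4465)
STD = (0.2023, 0.1994, 0.2010)

def normalize_pixel(rgb):
    """Apply (x - mean) / std to one RGB pixel already scaled to [0, 1]."""
    return tuple((x - m) / s for x, m, s in zip(rgb, MEAN, STD))

# A pixel equal to the channel means maps to zero in every channel.
print(normalize_pixel(MEAN))
# A pure white pixel ends up a few standard deviations above zero.
print(normalize_pixel((1.0, 1.0, 1.0)))
```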

3. Model instantiation

    # Model
    print('==> Building model..')
    net = ResNet18()

The two definitions it relies on are shown in the next two blocks:

    def ResNet18(num_classes=10):
        return ResNet(BasicBlock, [2,2,2,2], num_classes=num_classes)
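A rough shape check for `ResNet18` on 32×32 CIFAR inputs, written as a sketch in plain Python. It reuses the stride rule from `ResNet._make_layer` and assumes that `BasicBlock` (not shown in this post) has `expansion = 1` and that a block with stride `s` divides the spatial size by `s`. The helper names `make_strides` and `trace_resnet18` are illustrative.

```python
def make_strides(stride, num_blocks):
    # Same rule as ResNet._make_layer: only the first block of a stage may downsample.
    return [stride] + [1] * (num_blocks - 1)

def trace_resnet18(input_size=32):
    """Return [(channels, spatial size)] per stage and the flattened feature count."""
    size = input_size  # conv1 has kernel 3, stride 1, padding 1, so the size is unchanged
    shapes = []
    for planes, stride in zip([64, 128, 256, 512], [1, 2, 2, 2]):
        for s in make_strides(stride, 2):  # ResNet18 uses 2 blocks per stage
            size //= s                     # assumption: a stride-s block divides the size by s
        shapes.append((planes, size))
    pooled = size // 4                     # F.avg_pool2d(out, 4)
    return shapes, 512 * pooled * pooled   # assumption: block.expansion == 1

shapes, features = trace_resnet18()
print(shapes, features)
```

Under these assumptions the final feature map is 4×4, the average pool reduces it to 1×1, and the linear layer receives 512 features, which matches `nn.Linear(512*block.expansion, num_classes)`.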

    class ResNet(nn.Module):
        def __init__(self, block, num_blocks, num_classes=10):
            super(ResNet, self).__init__()
            self.in_planes = 64

            self.conv1 = nn.Conv2d(3, 64, kernel_size=3, stride=1, padding=1, bias=False)
            self.bn1 = nn.BatchNorm2d(64)
            self.layer1 = self._make_layer(block, 64, num_blocks[0], stride=1)
            self.layer2 = self._make_layer(block, 128, num_blocks[1], stride=2)
            self.layer3 = self._make_layer(block, 256, num_blocks[2], stride=2)
            self.layer4 = self._make_layer(block, 512, num_blocks[3], stride=2)
            self.linear = nn.Linear(512*block.expansion, num_classes)

        def _make_layer(self, block, planes, num_blocks, stride):
            # Only the first block of a stage uses the given stride; the rest use stride 1.
            strides = [stride] + [1]*(num_blocks-1)
            layers = []
            for stride in strides:
                layers.append(block(self.in_planes, planes, stride))
                self.in_planes = planes * block.expansion
            return nn.Sequential(*layers)

        def forward(self, x):
            out = F.relu(self.bn1(self.conv1(x)))
            out = self.layer1(out)
            out = self.layer2(out)
            out = self.layer3(out)
            out = self.layer4(out)
            out = F.avg_pool2d(out, 4)
            out = out.view(out.size(0), -1)
            out = self.linear(out)
            return out

4. GPU usage

    if use_cuda:
        net.cuda(device_id)
        cudnn.benchmark = True
        cudnn.deterministic = True

5. Settings

- Loss function

- Optimizer

- Other settings

    datasize = 50000
    # Integer division: identical to the original `/` under Python 2.7, and stays an int under Python 3.
    num_batch = datasize//args.batch_size+1
    lr_0 = 0.5  # initial lr
    M = 4  # number of cycles
    T = args.epochs*num_batch  # total number of iterations
    criterion = nn.CrossEntropyLoss()
    optimizer = optim.SGD(net.parameters(), lr=lr_0, momentum=1-args.alpha, weight_decay=5e-4)
    mt = 0

6. Training procedure (choose one of the two)
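Both training variants derive their schedule from the settings in section 5. As a quick arithmetic check, assuming the default arguments (batch size 64, 200 epochs), the sketch below works out how long one cycle is. The names `cycle_iters` and `cycle_epochs` are introduced here for illustration only.

```python
datasize = 50000
batch_size = 64   # default of --batch-size
epochs = 200      # default of --epochs
M = 4             # number of cycles

num_batch = datasize // batch_size + 1   # iterations per epoch
T = epochs * num_batch                   # total number of iterations
cycle_iters = T // M                     # iterations per cycle
cycle_epochs = cycle_iters // num_batch  # epochs per cycle

print(num_batch, T, cycle_iters, cycle_epochs)
```

With these defaults one cycle spans exactly 50 epochs, which is why the training code tests `epoch % 50` when deciding whether to inject noise or save a checkpoint.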

6.1 csgmcmc training procedure
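The `noise_loss` function in this subsection injects the Langevin noise through the loss instead of adding it to the weights directly. The sketch below checks numerically, for the SGLD case (`alpha = 1`, so the SGD momentum is 0), that the resulting per-step noise has standard deviation `sqrt(2 * lr * alpha * temperature / datasize)`. Both helper names are hypothetical, and the calculation ignores weight decay, which does not affect the noise term.

```python
def noise_std_via_loss(lr, alpha, temperature, datasize):
    noise_std = (2.0 / lr * alpha) ** 0.5    # std of the Gaussian drawn inside noise_loss
    scale = (temperature / datasize) ** 0.5  # factor applied to noise_loss in train()
    # The gradient of scale * sum(theta * eps) w.r.t. theta is scale * eps,
    # and a plain SGD step multiplies that gradient by lr.
    return lr * noise_std * scale

def noise_std_direct(lr, alpha, temperature, datasize):
    # Noise scale written out explicitly, as in the csghmc update.
    return (2.0 * lr * alpha * temperature / datasize) ** 0.5

for lr in (0.5, 0.1, 0.01):
    a = noise_std_via_loss(lr, 1, 1.0 / 50000, 50000)
    b = noise_std_direct(lr, 1, 1.0 / 50000, 50000)
    print(lr, a, b)
```

The two expressions agree up to floating-point rounding, so the loss-based formulation is a convenient way to reuse `optim.SGD` without writing a custom update rule.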

    def noise_loss(lr, alpha):
        # Inner product of every parameter with Gaussian noise; its gradient is the noise itself.
        noise_loss = 0.0
        noise_std = (2/lr*alpha)**0.5
        for var in net.parameters():
            means = torch.zeros(var.size()).cuda(device_id)
            noise_loss += torch.sum(var * torch.normal(means, std=noise_std).cuda(device_id))
        return noise_loss

    def adjust_learning_rate(optimizer, epoch, batch_idx):
        # Cosine schedule that restarts every T // M iterations.
        rcounter = epoch*num_batch+batch_idx
        cos_inner = np.pi * (rcounter % (T // M))
        cos_inner /= T // M
        cos_out = np.cos(cos_inner) + 1
        lr = 0.5*cos_out*lr_0

        for param_group in optimizer.param_groups:
            param_group['lr'] = lr
        return lr

    def train(epoch):
        print('\nEpoch: %d' % epoch)
        # Put the network in training mode.
        net.train()
        train_loss = 0
        correct = 0
        total = 0
        for batch_idx, (inputs, targets) in enumerate(trainloader):
            if use_cuda:
                inputs, targets = inputs.cuda(device_id), targets.cuda(device_id)
            optimizer.zero_grad()
            lr = adjust_learning_rate(optimizer, epoch, batch_idx)
            outputs = net(inputs)
            if (epoch%50)+1>45:
                # Sampling stage: add the noise term during the last 5 epochs of each cycle.
                loss_noise = noise_loss(lr, args.alpha)*(args.temperature/datasize)**.5
                loss = criterion(outputs, targets)+loss_noise
            else:
                loss = criterion(outputs, targets)
            loss.backward()
            optimizer.step()

            train_loss += loss.data.item()
            _, predicted = torch.max(outputs.data, 1)
            total += targets.size(0)
            correct += predicted.eq(targets.data).cpu().sum()
            if batch_idx%100==0:
                print('Loss: %.3f | Acc: %.3f%% (%d/%d)'
                      % (train_loss/(batch_idx+1), 100.*correct.item()/total, correct, total))

6.2 csghmc training procedure
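Before reading `update_params`, it may help to see the same update rule on a single scalar. This is a toy sketch, not the repository code: the function `sghmc_step`, the quadratic potential, and the step sizes are assumptions chosen to keep the example deterministic (noise is switched off by default).

```python
import random

def sghmc_step(p, buf, grad, lr, alpha, noise_std=0.0, rng=random):
    """One scalar update mirroring update_params: momentum decay, gradient step, optional noise."""
    buf_new = (1.0 - alpha) * buf - lr * grad
    if noise_std > 0.0:
        buf_new += noise_std * rng.gauss(0.0, 1.0)
    return p + buf_new, buf_new

def run(alpha, steps=200, lr=0.1):
    # Toy potential U(p) = p^2 / 2, so the gradient is simply p.
    p, buf = 5.0, 0.0
    for _ in range(steps):
        p, buf = sghmc_step(p, buf, grad=p, lr=lr, alpha=alpha)
    return p

print(run(alpha=1.0))  # alpha = 1: the buffer carries no momentum (plain gradient step)
print(run(alpha=0.1))  # alpha < 1: 90% of the previous buffer is kept as momentum
```

With `alpha = 1` the previous buffer is discarded and the step reduces to plain gradient descent, which matches the `'1: SGLD'` help text of the `--alpha` argument; smaller values of `alpha` keep momentum.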

    def adjust_learning_rate(epoch, batch_idx):
        # Cosine schedule that restarts every T // M iterations.
        rcounter = epoch*num_batch+batch_idx
        cos_inner = np.pi * (rcounter % (T // M))
        cos_inner /= T // M
        cos_out = np.cos(cos_inner) + 1
        lr = 0.5*cos_out*lr_0
        return lr

    def update_params(lr, epoch):
        # NOTE: weight_decay must exist as a module-level variable; it is not defined
        # in this excerpt (the optimizer in section 5 uses 5e-4).
        for p in net.parameters():
            #print(net.linear.weight[0, 0])
            if not hasattr(p,'buf'):
                p.buf = torch.zeros(p.size())#.cuda(device_id)
            d_p = p.grad.data
            d_p.add_(weight_decay, p.data)
            buf_new = (1-args.alpha)*p.buf - lr*d_p
            if (epoch%50)+1>45:
                # Sampling stage: inject Gaussian noise during the last 5 epochs of each cycle.
                eps = torch.randn(p.size())#.cuda(device_id)
                buf_new += (2.0*lr*args.alpha*args.temperature/datasize)**.5*eps
            p.data.add_(buf_new)
            p.buf = buf_new

    def train(epoch):
        print('\nEpoch: %d' % epoch)
        net.train()
        train_loss = 0
        correct = 0
        total = 0
        for batch_idx, (inputs, targets) in enumerate(trainloader):
            if use_cuda:
                inputs, targets = inputs.cuda(device_id), targets.cuda(device_id)

            net.zero_grad()
            lr = adjust_learning_rate(epoch, batch_idx)
            outputs = net(inputs)
            loss = criterion(outputs, targets)
            loss.backward()
            update_params(lr, epoch)

            train_loss += loss.data.item()
            _, predicted = torch.max(outputs.data, 1)
            total += targets.size(0)
            correct += predicted.eq(targets.data).cpu().sum()
            if batch_idx%100==0:
                print('Loss: %.3f | Acc: %.3f%% (%d/%d)'
                      % (train_loss/(batch_idx+1), 100.*correct.item()/total, correct, total))

7. Test procedure

    def test(epoch):
        global best_acc
        # Put the network in evaluation mode and disable gradient tracking.
        net.eval()
        test_loss = 0
        correct = 0
        total = 0
        with torch.no_grad():
            for batch_idx, (inputs, targets) in enumerate(testloader):
                if use_cuda:
                    inputs, targets = inputs.cuda(device_id), targets.cuda(device_id)
                outputs = net(inputs)
                loss = criterion(outputs, targets)

                test_loss += loss.data.item()
                _, predicted = torch.max(outputs.data, 1)
                total += targets.size(0)
                correct += predicted.eq(targets.data).cpu().sum()

                if batch_idx%100==0:
                    print('Test Loss: %.3f | Test Acc: %.3f%% (%d/%d)'
                          % (test_loss/(batch_idx+1), 100.*correct.item()/total, correct, total))

        print('\nTest set: Average loss: {:.4f}, Accuracy: {}/{} ({:.2f}%)\n'.format(
            test_loss/len(testloader), correct, total,
            100. * correct.item() / total))

8. Start training
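To see which epochs the two `epoch % 50` conditions select, the following sketch enumerates them, assuming the default of 200 epochs. The list names are illustrative; the conditions themselves are copied from the training functions and from the training loop in this section.

```python
epochs = 200  # default of --epochs

# Epochs in which noise is injected (condition used inside train / update_params).
noise_epochs = [e for e in range(epochs) if (e % 50) + 1 > 45]
# Epochs after which a checkpoint is saved (condition used in the training loop).
saved_epochs = [e for e in range(epochs) if (e % 50) + 1 > 47]

print(noise_epochs[:5])
print(saved_epochs)
```

Under these defaults each 50-epoch cycle spends its last 5 epochs in the sampling stage and saves the last 3 of them, giving 12 checkpoints named `cifar_model_0.pt` through `cifar_model_11.pt`.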

    for epoch in range(args.epochs):
        train(epoch)
        test(epoch)
        if (epoch % 50)+1 > 47:  # save 3 models per cycle
            print('save!')
            net.cpu()
            torch.save(net.state_dict(), args.dir + '/cifar_model_%i.pt' % (mt))
            mt += 1
            net.cuda(device_id)

9. Training results
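The raw logs in this section are long, so a small parser can make them easier to read. The sketch below pulls the per-epoch test summary out of the log text with a regular expression. The function name `parse_test_summaries` is hypothetical, and the sample string is taken from the first epoch of the csgmcmc log.

```python
import re

# Matches lines such as: Test set: Average loss: 2.7806, Accuracy: 2927/10000 (29.27%)
TEST_LINE = re.compile(
    r"Test set: Average loss: ([\d.]+), Accuracy: (\d+)/(\d+) \(([\d.]+)%\)"
)

def parse_test_summaries(log_text):
    """Return (average loss, accuracy in %) for every 'Test set:' summary in the log."""
    return [(float(m.group(1)), float(m.group(4))) for m in TEST_LINE.finditer(log_text)]

sample = ("Test Loss: 2.473 | Test Acc: 41.000% (41/100) "
          "Test set: Average loss: 2.7806, Accuracy: 2927/10000 (29.27%) Epoch: 1")
print(parse_test_summaries(sample))
```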

9.1 csgmcmc (run not finished due to time constraints)

/home/mpdeep/anaconda3/envs/python27/bin/python /home/mpdeep/PycharmProjects/deepMC/csgmcmc/experiments/cifar_csgmcmc.py 'import sitecustomize' failed; use -v for traceback ==> Preparing data.. Files already downloaded and verified ==> Building model.. Epoch: 0 Loss: 2.358 | Acc: 12.500% (8/64) Loss: 3.011 | Acc: 16.847% (1089/6464) Loss: 2.500 | Acc: 20.818% (2678/12864) Loss: 2.288 | Acc: 23.884% (4601/19264) Loss: 2.157 | Acc: 26.543% (6812/25664) Loss: 2.065 | Acc: 28.580% (9164/32064) Loss: 1.998 | Acc: 30.246% (11634/38464) Loss: 1.940 | Acc: 31.798% (14266/44864) Test Loss: 2.473 | Test Acc: 41.000% (41/100) Test set: Average loss: 2.7806, Accuracy: 2927/10000 (29.27%) Epoch: 1 Loss: 1.934 | Acc: 31.250% (20/64) Loss: 1.536 | Acc: 43.487% (2811/6464) Loss: 1.510 | Acc: 44.302% (5699/12864) Loss: 1.496 | Acc: 44.928% (8655/19264) Loss: 1.482 | Acc: 45.472% (11670/25664) Loss: 1.466 | Acc: 46.052% (14766/32064) Loss: 1.450 | Acc: 46.703% (17964/38464) Loss: 1.430 | Acc: 47.568% (21341/44864) Test Loss: 1.768 | Test Acc: 46.000% (46/100) Test set: Average loss: 1.6926, Accuracy: 4486/10000 (44.86%) Epoch: 2 Loss: 1.234 | Acc: 60.938% (39/64) Loss: 1.258 | Acc: 54.162% (3501/6464) Loss: 1.259 | Acc: 54.742% (7042/12864) Loss: 1.235 | Acc: 55.612% (10713/19264) Loss: 1.214 | Acc: 56.382% (14470/25664) Loss: 1.198 | Acc: 57.095% (18307/32064) Loss: 1.186 | Acc: 57.602% (22156/38464) Loss: 1.175 | Acc: 57.993% (26018/44864) Test Loss: 1.273 | Test Acc: 57.000% (57/100) Test set: Average loss: 1.3704, Accuracy: 5369/10000 (53.69%) Epoch: 3 Loss: 1.235 | Acc: 51.562% (33/64) Loss: 1.045 | Acc: 62.191% (4020/6464) Loss: 1.044 | Acc: 62.725% (8069/12864) Loss: 1.030 | Acc: 63.279% (12190/19264) Loss: 1.016 | Acc: 63.837% (16383/25664) Loss: 1.003 | Acc: 64.271% (20608/32064) Loss: 0.991 | Acc: 64.595% (24846/38464) Loss: 0.979 | Acc: 65.152% (29230/44864) Test Loss: 1.391 | Test Acc: 54.000% (54/100) Test set: Average loss: 1.2947, Accuracy: 5832/10000 (58.32%) Epoch: 
4 Loss: 1.136 | Acc: 68.750% (44/64) Loss: 0.865 | Acc: 69.957% (4522/6464) Loss: 0.860 | Acc: 70.157% (9025/12864) Loss: 0.852 | Acc: 70.172% (13518/19264) Loss: 0.842 | Acc: 70.457% (18082/25664) Loss: 0.833 | Acc: 70.780% (22695/32064) Loss: 0.823 | Acc: 71.215% (27392/38464) Loss: 0.814 | Acc: 71.512% (32083/44864) Test Loss: 2.223 | Test Acc: 46.000% (46/100) Test set: Average loss: 2.1270, Accuracy: 4638/10000 (46.38%) Epoch: 5 Loss: 1.826 | Acc: 48.438% (31/64) Loss: 0.737 | Acc: 74.103% (4790/6464) Loss: 0.722 | Acc: 74.736% (9614/12864) Loss: 0.711 | Acc: 75.099% (14467/19264) Loss: 0.710 | Acc: 75.207% (19301/25664) Loss: 0.704 | Acc: 75.462% (24196/32064) Loss: 0.698 | Acc: 75.624% (29088/38464) Loss: 0.695 | Acc: 75.753% (33986/44864) Test Loss: 1.090 | Test Acc: 64.000% (64/100) Test set: Average loss: 1.2203, Accuracy: 6296/10000 (62.96%) Epoch: 6 Loss: 0.664 | Acc: 79.688% (51/64) Loss: 0.633 | Acc: 77.785% (5028/6464) Loss: 0.633 | Acc: 77.760% (10003/12864) Loss: 0.628 | Acc: 78.016% (15029/19264) Loss: 0.626 | Acc: 78.129% (20051/25664) Loss: 0.625 | Acc: 78.250% (25090/32064) Loss: 0.622 | Acc: 78.291% (30114/38464) Loss: 0.622 | Acc: 78.439% (35191/44864) Test Loss: 1.080 | Test Acc: 66.000% (66/100) Test set: Average loss: 1.1048, Accuracy: 6472/10000 (64.72%) Epoch: 7 Loss: 0.668 | Acc: 78.125% (50/64) Loss: 0.571 | Acc: 80.028% (5173/6464) Loss: 0.582 | Acc: 79.447% (10220/12864) Loss: 0.577 | Acc: 79.833% (15379/19264) Loss: 0.577 | Acc: 80.011% (20534/25664) Loss: 0.573 | Acc: 80.052% (25668/32064) Loss: 0.575 | Acc: 80.044% (30788/38464) Loss: 0.574 | Acc: 80.064% (35920/44864) Test Loss: 0.731 | Test Acc: 73.000% (73/100) Test set: Average loss: 0.7476, Accuracy: 7533/10000 (75.33%) Epoch: 8 Loss: 0.648 | Acc: 81.250% (52/64) Loss: 0.556 | Acc: 80.832% (5225/6464) Loss: 0.543 | Acc: 81.312% (10460/12864) Loss: 0.536 | Acc: 81.691% (15737/19264) Loss: 0.534 | Acc: 81.710% (20970/25664) Loss: 0.533 | Acc: 81.755% (26214/32064) Loss: 0.536 | 
Acc: 81.637% (31401/38464) Loss: 0.536 | Acc: 81.653% (36633/44864) Test Loss: 0.824 | Test Acc: 69.000% (69/100) Test set: Average loss: 0.8075, Accuracy: 7307/10000 (73.07%) Epoch: 9 Loss: 0.670 | Acc: 79.688% (51/64) Loss: 0.503 | Acc: 82.967% (5363/6464) Loss: 0.508 | Acc: 82.812% (10653/12864) Loss: 0.513 | Acc: 82.652% (15922/19264) Loss: 0.511 | Acc: 82.688% (21221/25664) Loss: 0.509 | Acc: 82.710% (26520/32064) Loss: 0.511 | Acc: 82.714% (31815/38464) Loss: 0.508 | Acc: 82.792% (37144/44864) Test Loss: 1.889 | Test Acc: 50.000% (50/100) Test set: Average loss: 1.7662, Accuracy: 5010/10000 (50.10%) Epoch: 10 Loss: 0.759 | Acc: 81.250% (52/64) Loss: 0.486 | Acc: 83.277% (5383/6464) Loss: 0.483 | Acc: 83.294% (10715/12864) Loss: 0.489 | Acc: 83.243% (16036/19264) Loss: 0.488 | Acc: 83.132% (21335/25664) Loss: 0.484 | Acc: 83.358% (26728/32064) Loss: 0.484 | Acc: 83.403% (32080/38464) Loss: 0.482 | Acc: 83.457% (37442/44864) Test Loss: 1.741 | Test Acc: 56.000% (56/100) Test set: Average loss: 1.6062, Accuracy: 6349/10000 (63.49%) Epoch: 11 Loss: 0.732 | Acc: 78.125% (50/64) Loss: 0.458 | Acc: 84.236% (5445/6464) Loss: 0.466 | Acc: 84.033% (10810/12864) Loss: 0.464 | Acc: 84.121% (16205/19264) Loss: 0.462 | Acc: 84.176% (21603/25664) Loss: 0.464 | Acc: 84.019% (26940/32064) Loss: 0.465 | Acc: 84.063% (32334/38464) Loss: 0.464 | Acc: 84.096% (37729/44864) Test Loss: 0.549 | Test Acc: 81.000% (81/100) Test set: Average loss: 0.6446, Accuracy: 7943/10000 (79.43%) Epoch: 12 Loss: 0.532 | Acc: 81.250% (52/64) Loss: 0.433 | Acc: 85.102% (5501/6464) Loss: 0.440 | Acc: 84.950% (10928/12864) Loss: 0.439 | Acc: 85.060% (16386/19264) Loss: 0.443 | Acc: 84.874% (21782/25664) Loss: 0.445 | Acc: 84.752% (27175/32064) Loss: 0.444 | Acc: 84.768% (32605/38464) Loss: 0.443 | Acc: 84.783% (38037/44864) Test Loss: 0.782 | Test Acc: 77.000% (77/100) Test set: Average loss: 0.8711, Accuracy: 7233/10000 (72.33%) Epoch: 13 Loss: 0.709 | Acc: 70.312% (45/64) Loss: 0.435 | Acc: 85.056% 
(5498/6464) Loss: 0.424 | Acc: 85.650% (11018/12864) Loss: 0.428 | Acc: 85.486% (16468/19264) Loss: 0.428 | Acc: 85.478% (21937/25664) Loss: 0.424 | Acc: 85.569% (27437/32064) Loss: 0.424 | Acc: 85.566% (32912/38464) Loss: 0.423 | Acc: 85.521% (38368/44864) Test Loss: 1.228 | Test Acc: 62.000% (62/100) Test set: Average loss: 1.4019, Accuracy: 6317/10000 (63.17%) Epoch: 14 Loss: 0.650 | Acc: 79.688% (51/64) Loss: 0.424 | Acc: 85.675% (5538/6464) Loss: 0.417 | Acc: 85.510% (11000/12864) Loss: 0.418 | Acc: 85.387% (16449/19264) Loss: 0.417 | Acc: 85.513% (21946/25664) Loss: 0.410 | Acc: 85.800% (27511/32064) Loss: 0.412 | Acc: 85.795% (33000/38464) Loss: 0.412 | Acc: 85.755% (38473/44864) Test Loss: 3.320 | Test Acc: 40.000% (40/100) Test set: Average loss: 3.4038, Accuracy: 3627/10000 (36.27%) Epoch: 15 Loss: 0.974 | Acc: 65.625% (42/64) Loss: 0.404 | Acc: 86.278% (5577/6464) Loss: 0.396 | Acc: 86.381% (11112/12864) Loss: 0.397 | Acc: 86.425% (16649/19264) Loss: 0.388 | Acc: 86.767% (22268/25664) Loss: 0.389 | Acc: 86.736% (27811/32064) Loss: 0.391 | Acc: 86.577% (33301/38464) Loss: 0.391 | Acc: 86.606% (38855/44864) Test Loss: 0.747 | Test Acc: 78.000% (78/100) Test set: Average loss: 0.7592, Accuracy: 7626/10000 (76.26%) Epoch: 16 Loss: 0.452 | Acc: 81.250% (52/64) Loss: 0.373 | Acc: 87.345% (5646/6464) Loss: 0.372 | Acc: 87.422% (11246/12864) Loss: 0.368 | Acc: 87.469% (16850/19264) Loss: 0.374 | Acc: 87.301% (22405/25664) Loss: 0.375 | Acc: 87.282% (27986/32064) Loss: 0.377 | Acc: 87.162% (33526/38464) Loss: 0.379 | Acc: 87.012% (39037/44864) Test Loss: 1.432 | Test Acc: 60.000% (60/100) Test set: Average loss: 1.6357, Accuracy: 5992/10000 (59.92%) Epoch: 17 Loss: 0.485 | Acc: 82.812% (53/64) Loss: 0.347 | Acc: 87.995% (5688/6464) Loss: 0.364 | Acc: 87.422% (11246/12864) Loss: 0.364 | Acc: 87.536% (16863/19264) Loss: 0.361 | Acc: 87.695% (22506/25664) Loss: 0.359 | Acc: 87.771% (28143/32064) Loss: 0.361 | Acc: 87.698% (33732/38464) Loss: 0.362 | Acc: 87.652% 
(39324/44864) Test Loss: 0.846 | Test Acc: 78.000% (78/100) Test set: Average loss: 0.6901, Accuracy: 7920/10000 (79.20%) Epoch: 18 Loss: 0.596 | Acc: 82.812% (53/64) Loss: 0.343 | Acc: 88.397% (5714/6464) Loss: 0.333 | Acc: 88.814% (11425/12864) Loss: 0.337 | Acc: 88.497% (17048/19264) Loss: 0.340 | Acc: 88.412% (22690/25664) Loss: 0.341 | Acc: 88.370% (28335/32064) Loss: 0.344 | Acc: 88.303% (33965/38464) Loss: 0.345 | Acc: 88.207% (39573/44864) Test Loss: 0.476 | Test Acc: 86.000% (86/100) Test set: Average loss: 0.5138, Accuracy: 8373/10000 (83.73%) Epoch: 19 Loss: 0.246 | Acc: 93.750% (60/64) Loss: 0.329 | Acc: 88.614% (5728/6464) Loss: 0.327 | Acc: 88.666% (11406/12864) Loss: 0.326 | Acc: 88.844% (17115/19264) Loss: 0.329 | Acc: 88.622% (22744/25664) Loss: 0.332 | Acc: 88.563% (28397/32064) Loss: 0.333 | Acc: 88.524% (34050/38464) Loss: 0.334 | Acc: 88.514% (39711/44864) Test Loss: 0.548 | Test Acc: 81.000% (81/100) Test set: Average loss: 0.5267, Accuracy: 8295/10000 (82.95%) Epoch: 20 Loss: 0.302 | Acc: 90.625% (58/64) Loss: 0.311 | Acc: 89.341% (5775/6464) Loss: 0.314 | Acc: 89.117% (11464/12864) Loss: 0.321 | Acc: 88.891% (17124/19264) Loss: 0.321 | Acc: 89.000% (22841/25664) Loss: 0.321 | Acc: 89.025% (28545/32064) Loss: 0.323 | Acc: 88.995% (34231/38464) Loss: 0.322 | Acc: 89.011% (39934/44864) Test Loss: 1.747 | Test Acc: 55.000% (55/100) Test set: Average loss: 1.5880, Accuracy: 5479/10000 (54.79%) Epoch: 21 Loss: 0.737 | Acc: 75.000% (48/64) Loss: 0.322 | Acc: 89.047% (5756/6464) Loss: 0.317 | Acc: 89.272% (11484/12864) Loss: 0.307 | Acc: 89.556% (17252/19264) Loss: 0.310 | Acc: 89.429% (22951/25664) Loss: 0.307 | Acc: 89.558% (28716/32064) Loss: 0.308 | Acc: 89.411% (34391/38464) Loss: 0.307 | Acc: 89.410% (40113/44864) Test Loss: 2.520 | Test Acc: 50.000% (50/100) Test set: Average loss: 2.2063, Accuracy: 5276/10000 (52.76%) Epoch: 22 Loss: 0.697 | Acc: 78.125% (50/64) Loss: 0.287 | Acc: 90.718% (5864/6464) Loss: 0.291 | Acc: 90.221% (11606/12864) 
Loss: 0.293 | Acc: 90.085% (17354/19264) Loss: 0.295 | Acc: 89.924% (23078/25664) Loss: 0.296 | Acc: 89.936% (28837/32064) Loss: 0.296 | Acc: 89.965% (34604/38464) Loss: 0.296 | Acc: 89.921% (40342/44864) Test Loss: 1.003 | Test Acc: 73.000% (73/100) Test set: Average loss: 1.0247, Accuracy: 7095/10000 (70.95%) Epoch: 23 Loss: 0.346 | Acc: 85.938% (55/64) Loss: 0.251 | Acc: 91.337% (5904/6464) Loss: 0.264 | Acc: 90.967% (11702/12864) Loss: 0.264 | Acc: 90.968% (17524/19264) Loss: 0.270 | Acc: 90.738% (23287/25664) Loss: 0.274 | Acc: 90.641% (29063/32064) Loss: 0.273 | Acc: 90.721% (34895/38464) Loss: 0.277 | Acc: 90.623% (40657/44864) Test Loss: 1.066 | Test Acc: 68.000% (68/100) Test set: Average loss: 1.0550, Accuracy: 6955/10000 (69.55%) Epoch: 24 Loss: 0.413 | Acc: 87.500% (56/64) Loss: 0.261 | Acc: 91.306% (5902/6464) Loss: 0.268 | Acc: 90.920% (11696/12864) Loss: 0.268 | Acc: 90.833% (17498/19264) Loss: 0.268 | Acc: 90.777% (23297/25664) Loss: 0.269 | Acc: 90.747% (29097/32064) Loss: 0.271 | Acc: 90.638% (34863/38464) Loss: 0.270 | Acc: 90.676% (40681/44864) Test Loss: 0.651 | Test Acc: 84.000% (84/100) Test set: Average loss: 0.6353, Accuracy: 8073/10000 (80.73%) Epoch: 25 Loss: 0.349 | Acc: 90.625% (58/64) Loss: 0.249 | Acc: 91.383% (5907/6464) Loss: 0.260 | Acc: 91.123% (11722/12864) Loss: 0.258 | Acc: 91.289% (17586/19264) Loss: 0.251 | Acc: 91.463% (23473/25664) Loss: 0.251 | Acc: 91.480% (29332/32064) Loss: 0.253 | Acc: 91.397% (35155/38464) Loss: 0.255 | Acc: 91.271% (40948/44864) Test Loss: 4.117 | Test Acc: 43.000% (43/100) Test set: Average loss: 3.6455, Accuracy: 4458/10000 (44.58%) Epoch: 26 Loss: 0.564 | Acc: 84.375% (54/64) Loss: 0.259 | Acc: 90.950% (5879/6464) Loss: 0.239 | Acc: 91.698% (11796/12864) Loss: 0.238 | Acc: 91.798% (17684/19264) Loss: 0.240 | Acc: 91.681% (23529/25664) Loss: 0.243 | Acc: 91.595% (29369/32064) Loss: 0.242 | Acc: 91.660% (35256/38464) Loss: 0.241 | Acc: 91.688% (41135/44864) Test Loss: 0.628 | Test Acc: 84.000% 
(84/100) Test set: Average loss: 0.7000, Accuracy: 7898/10000 (78.98%) Epoch: 27 Loss: 0.355 | Acc: 89.062% (57/64) Loss: 0.222 | Acc: 92.466% (5977/6464) Loss: 0.221 | Acc: 92.467% (11895/12864) Loss: 0.227 | Acc: 92.359% (17792/19264) Loss: 0.226 | Acc: 92.316% (23692/25664) Loss: 0.227 | Acc: 92.209% (29566/32064) Loss: 0.226 | Acc: 92.258% (35486/38464) Loss: 0.226 | Acc: 92.272% (41397/44864) Test Loss: 0.460 | Test Acc: 84.000% (84/100) Test set: Average loss: 0.5778, Accuracy: 8349/10000 (83.49%) Epoch: 28 Loss: 0.464 | Acc: 84.375% (54/64) Loss: 0.205 | Acc: 92.915% (6006/6464) Loss: 0.209 | Acc: 92.747% (11931/12864) Loss: 0.206 | Acc: 92.831% (17883/19264) Loss: 0.208 | Acc: 92.764% (23807/25664) Loss: 0.207 | Acc: 92.836% (29767/32064) Loss: 0.207 | Acc: 92.876% (35724/38464) Loss: 0.206 | Acc: 92.887% (41673/44864) Test Loss: 0.368 | Test Acc: 87.000% (87/100) Test set: Average loss: 0.4783, Accuracy: 8558/10000 (85.58%) Epoch: 29 Loss: 0.260 | Acc: 87.500% (56/64) Loss: 0.197 | Acc: 93.193% (6024/6464) Loss: 0.199 | Acc: 93.221% (11992/12864) Loss: 0.197 | Acc: 93.184% (17951/19264) Loss: 0.198 | Acc: 93.158% (23908/25664) Loss: 0.200 | Acc: 93.151% (29868/32064) Loss: 0.201 | Acc: 93.100% (35810/38464) Loss: 0.201 | Acc: 93.075% (41757/44864) Test Loss: 0.671 | Test Acc: 79.000% (79/100) Test set: Average loss: 0.6245, Accuracy: 8084/10000 (80.84%) Epoch: 30 Loss: 0.444 | Acc: 84.375% (54/64) Loss: 0.174 | Acc: 94.477% (6107/6464) Loss: 0.172 | Acc: 94.317% (12133/12864) Loss: 0.175 | Acc: 94.160% (18139/19264) Loss: 0.179 | Acc: 93.957% (24113/25664) Loss: 0.180 | Acc: 93.950% (30124/32064) Loss: 0.183 | Acc: 93.810% (36083/38464) Loss: 0.183 | Acc: 93.788% (42077/44864) Test Loss: 0.311 | Test Acc: 91.000% (91/100) Test set: Average loss: 0.2959, Accuracy: 9027/10000 (90.27%) Epoch: 31 Loss: 0.138 | Acc: 95.312% (61/64) Loss: 0.147 | Acc: 95.096% (6147/6464) Loss: 0.156 | Acc: 94.846% (12201/12864) Loss: 0.161 | Acc: 94.617% (18227/19264) Loss: 
0.163 | Acc: 94.432% (24235/25664) Loss: 0.163 | Acc: 94.389% (30265/32064) Loss: 0.164 | Acc: 94.348% (36290/38464) Loss: 0.167 | Acc: 94.280% (42298/44864) Test Loss: 0.678 | Test Acc: 85.000% (85/100) Test set: Average loss: 0.6360, Accuracy: 8334/10000 (83.34%) Epoch: 32 Loss: 0.109 | Acc: 96.875% (62/64) Loss: 0.147 | Acc: 94.972% (6139/6464) Loss: 0.147 | Acc: 94.970% (12217/12864) Loss: 0.150 | Acc: 94.840% (18270/19264) Loss: 0.149 | Acc: 94.931% (24363/25664) Loss: 0.149 | Acc: 94.932% (30439/32064) Loss: 0.151 | Acc: 94.858% (36486/38464) Loss: 0.149 | Acc: 94.885% (42569/44864) Test Loss: 0.316 | Test Acc: 91.000% (91/100) Test set: Average loss: 0.4303, Accuracy: 8682/10000 (86.82%) Epoch: 33 Loss: 0.068 | Acc: 100.000% (64/64) Loss: 0.139 | Acc: 95.467% (6171/6464) Loss: 0.136 | Acc: 95.460% (12280/12864) Loss: 0.135 | Acc: 95.479% (18393/19264) Loss: 0.136 | Acc: 95.500% (24509/25664) Loss: 0.135 | Acc: 95.422% (30596/32064) Loss: 0.138 | Acc: 95.328% (36667/38464) Loss: 0.137 | Acc: 95.341% (42774/44864)

9.2 csghmc (run on a Xiaomi Notebook Air; not finished due to time constraints)

/home/ygf/miniforge3/envs/py27_csgmcmc/bin/python /home/ygf/deep_MCMC/csgmcmc/experiments/cifar_csghmc.py --dir ./model --data_path ./data ==> Preparing data.. Files already downloaded and verified Files already downloaded and verified ==> Building model.. use cuda ! Epoch: 0 Loss: 2.358 | Acc: 12.500% (8/64) Loss: 2.969 | Acc: 13.846% (895/6464) Loss: 2.521 | Acc: 17.133% (2204/12864) Loss: 2.346 | Acc: 19.347% (3727/19264) Loss: 2.240 | Acc: 21.291% (5464/25664) Loss: 2.164 | Acc: 22.973% (7366/32064) Loss: 2.103 | Acc: 24.600% (9462/38464) Loss: 2.048 | Acc: 26.139% (11727/44864) Test Loss: 2.492 | Test Acc: 28.000% (28/100) Test set: Average loss: 2.5745, Accuracy: 2192/10000 (21.92%) Epoch: 1 Loss: 2.228 | Acc: 25.000% (16/64) Loss: 1.610 | Acc: 39.975% (2584/6464) Loss: 1.583 | Acc: 41.294% (5312/12864) Loss: 1.565 | Acc: 42.115% (8113/19264) Loss: 1.547 | Acc: 42.842% (10995/25664) Loss: 1.525 | Acc: 43.731% (14022/32064) Loss: 1.505 | Acc: 44.535% (17130/38464) Loss: 1.481 | Acc: 45.531% (20427/44864) Test Loss: 1.714 | Test Acc: 40.000% (40/100) Test set: Average loss: 1.5870, Accuracy: 4587/10000 (45.87%) Epoch: 2 Loss: 1.348 | Acc: 50.000% (32/64) Loss: 1.275 | Acc: 54.131% (3499/6464) Loss: 1.268 | Acc: 54.384% (6996/12864) Loss: 1.242 | Acc: 55.461% (10684/19264) Loss: 1.217 | Acc: 56.363% (14465/25664) Loss: 1.198 | Acc: 57.126% (18317/32064) Loss: 1.182 | Acc: 57.792% (22229/38464) Loss: 1.167 | Acc: 58.252% (26134/44864) Test Loss: 1.325 | Test Acc: 54.000% (54/100) Test set: Average loss: 1.4081, Accuracy: 5348/10000 (53.48%) Epoch: 3 Loss: 1.253 | Acc: 56.250% (36/64) Loss: 1.015 | Acc: 63.660% (4115/6464) Loss: 1.016 | Acc: 64.210% (8260/12864) Loss: 0.994 | Acc: 64.976% (12517/19264) Loss: 0.982 | Acc: 65.403% (16785/25664) Loss: 0.969 | Acc: 65.803% (21099/32064) Loss: 0.955 | Acc: 66.202% (25464/38464) Loss: 0.944 | Acc: 66.621% (29889/44864) Test Loss: 1.069 | Test Acc: 64.000% (64/100) Test set: Average loss: 1.1429, Accuracy: 6263/10000 
(62.63%) Epoch: 4 Loss: 1.236 | Acc: 62.500% (40/64) Loss: 0.821 | Acc: 71.504% (4622/6464) Loss: 0.813 | Acc: 71.789% (9235/12864) Loss: 0.807 | Acc: 72.072% (13884/19264) Loss: 0.797 | Acc: 72.198% (18529/25664) Loss: 0.793 | Acc: 72.430% (23224/32064) Loss: 0.783 | Acc: 72.777% (27993/38464) Loss: 0.774 | Acc: 73.119% (32804/44864) Test Loss: 1.113 | Test Acc: 62.000% (62/100) Test set: Average loss: 1.2412, Accuracy: 5995/10000 (59.95%) Epoch: 5 Loss: 1.099 | Acc: 67.188% (43/64) Loss: 0.693 | Acc: 75.990% (4912/6464) Loss: 0.688 | Acc: 76.011% (9778/12864) Loss: 0.682 | Acc: 76.334% (14705/19264) Loss: 0.680 | Acc: 76.422% (19613/25664) Loss: 0.676 | Acc: 76.503% (24530/32064) Loss: 0.671 | Acc: 76.633% (29476/38464) Loss: 0.669 | Acc: 76.779% (34446/44864) Test Loss: 1.214 | Test Acc: 58.000% (58/100) Test set: Average loss: 1.1211, Accuracy: 6441/10000 (64.41%) Epoch: 6 Loss: 0.807 | Acc: 68.750% (44/64) Loss: 0.618 | Acc: 78.543% (5077/6464) Loss: 0.615 | Acc: 78.568% (10107/12864) Loss: 0.611 | Acc: 78.691% (15159/19264) Loss: 0.606 | Acc: 78.803% (20224/25664) Loss: 0.605 | Acc: 78.958% (25317/32064) Loss: 0.605 | Acc: 78.947% (30366/38464) Loss: 0.602 | Acc: 79.119% (35496/44864) Test Loss: 1.030 | Test Acc: 62.000% (62/100) Test set: Average loss: 1.2698, Accuracy: 6301/10000 (63.01%) Epoch: 7 Loss: 0.703 | Acc: 73.438% (47/64) Loss: 0.545 | Acc: 81.002% (5236/6464) Loss: 0.562 | Acc: 80.550% (10362/12864) Loss: 0.557 | Acc: 80.845% (15574/19264) Loss: 0.559 | Acc: 80.720% (20716/25664) Loss: 0.555 | Acc: 80.857% (25926/32064) Loss: 0.558 | Acc: 80.772% (31068/38464) Loss: 0.559 | Acc: 80.777% (36240/44864) Test Loss: 0.558 | Test Acc: 79.000% (79/100) Test set: Average loss: 0.6320, Accuracy: 7887/10000 (78.87%) Epoch: 8 Loss: 0.607 | Acc: 81.250% (52/64) Loss: 0.536 | Acc: 81.699% (5281/6464) Loss: 0.524 | Acc: 82.058% (10556/12864) Loss: 0.521 | Acc: 82.382% (15870/19264) Loss: 0.524 | Acc: 82.212% (21099/25664) Loss: 0.523 | Acc: 82.108% 
(26327/32064) Loss: 0.527 | Acc: 81.916% (31508/38464) Loss: 0.527 | Acc: 81.986% (36782/44864) Test Loss: 1.428 | Test Acc: 58.000% (58/100) Test set: Average loss: 1.2453, Accuracy: 6638/10000 (66.38%) Epoch: 9 Loss: 0.614 | Acc: 82.812% (53/64) Loss: 0.492 | Acc: 83.215% (5379/6464) Loss: 0.498 | Acc: 82.851% (10658/12864) Loss: 0.508 | Acc: 82.569% (15906/19264) Loss: 0.505 | Acc: 82.602% (21199/25664) Loss: 0.503 | Acc: 82.747% (26532/32064) Loss: 0.507 | Acc: 82.618% (31778/38464) Loss: 0.504 | Acc: 82.748% (37124/44864) Test Loss: 2.370 | Test Acc: 50.000% (50/100) Test set: Average loss: 1.9772, Accuracy: 5289/10000 (52.89%) Epoch: 10 Loss: 0.669 | Acc: 75.000% (48/64) Loss: 0.485 | Acc: 83.060% (5369/6464) Loss: 0.471 | Acc: 83.808% (10781/12864) Loss: 0.481 | Acc: 83.550% (16095/19264) Loss: 0.481 | Acc: 83.416% (21408/25664) Loss: 0.479 | Acc: 83.520% (26780/32064) Loss: 0.480 | Acc: 83.533% (32130/38464) Loss: 0.480 | Acc: 83.452% (37440/44864) Test Loss: 0.938 | Test Acc: 70.000% (70/100) Test set: Average loss: 0.8622, Accuracy: 7310/10000 (73.10%) Epoch: 11 Loss: 0.535 | Acc: 81.250% (52/64) Loss: 0.461 | Acc: 84.158% (5440/6464) Loss: 0.466 | Acc: 84.064% (10814/12864) Loss: 0.463 | Acc: 84.152% (16211/19264) Loss: 0.468 | Acc: 83.942% (21543/25664) Loss: 0.471 | Acc: 83.820% (26876/32064) Loss: 0.471 | Acc: 83.808% (32236/38464) Loss: 0.469 | Acc: 83.896% (37639/44864) Test Loss: 0.895 | Test Acc: 73.000% (73/100) Test set: Average loss: 0.9639, Accuracy: 7161/10000 (71.61%) Epoch: 12 Loss: 0.651 | Acc: 75.000% (48/64) Loss: 0.459 | Acc: 84.112% (5437/6464) Loss: 0.450 | Acc: 84.771% (10905/12864) Loss: 0.450 | Acc: 84.790% (16334/19264) Loss: 0.447 | Acc: 84.858% (21778/25664) Loss: 0.449 | Acc: 84.787% (27186/32064) Loss: 0.448 | Acc: 84.890% (32652/38464) Loss: 0.447 | Acc: 84.879% (38080/44864) Test Loss: 0.946 | Test Acc: 72.000% (72/100) Test set: Average loss: 1.1126, Accuracy: 6712/10000 (67.12%) Epoch: 13 Loss: 0.692 | Acc: 73.438% (47/64) 
Loss: 0.426 | Acc: 85.473% (5525/6464) Loss: 0.430 | Acc: 85.331% (10977/12864) Loss: 0.428 | Acc: 85.278% (16428/19264) Loss: 0.430 | Acc: 85.162% (21856/25664) Loss: 0.427 | Acc: 85.336% (27362/32064) Loss: 0.427 | Acc: 85.389% (32844/38464) Loss: 0.425 | Acc: 85.405% (38316/44864) Test Loss: 1.140 | Test Acc: 64.000% (64/100) Test set: Average loss: 1.2493, Accuracy: 6454/10000 (64.54%) Epoch: 14 Loss: 0.647 | Acc: 75.000% (48/64) Loss: 0.442 | Acc: 84.839% (5484/6464) Loss: 0.430 | Acc: 85.246% (10966/12864) Loss: 0.426 | Acc: 85.444% (16460/19264) Loss: 0.423 | Acc: 85.493% (21941/25664) Loss: 0.418 | Acc: 85.594% (27445/32064) Loss: 0.418 | Acc: 85.649% (32944/38464) Loss: 0.417 | Acc: 85.603% (38405/44864) Test Loss: 3.071 | Test Acc: 51.000% (51/100) Test set: Average loss: 3.3240, Accuracy: 4738/10000 (47.38%) Epoch: 15 Loss: 1.045 | Acc: 67.188% (43/64) Loss: 0.407 | Acc: 86.185% (5571/6464) Loss: 0.403 | Acc: 86.023% (11066/12864) Loss: 0.407 | Acc: 85.891% (16546/19264) Loss: 0.401 | Acc: 86.086% (22093/25664) Loss: 0.399 | Acc: 86.215% (27644/32064) Loss: 0.401 | Acc: 86.138% (33132/38464) Loss: 0.402 | Acc: 86.131% (38642/44864) Test Loss: 1.214 | Test Acc: 66.000% (66/100) Test set: Average loss: 1.0818, Accuracy: 6922/10000 (69.22%) Epoch: 16 Loss: 0.589 | Acc: 82.812% (53/64) Loss: 0.384 | Acc: 86.618% (5599/6464) Loss: 0.383 | Acc: 86.839% (11171/12864) Loss: 0.380 | Acc: 87.012% (16762/19264) Loss: 0.384 | Acc: 86.818% (22281/25664) Loss: 0.383 | Acc: 86.773% (27823/32064) Loss: 0.384 | Acc: 86.827% (33397/38464) Loss: 0.384 | Acc: 86.827% (38954/44864) Test Loss: 0.654 | Test Acc: 80.000% (80/100) Test set: Average loss: 0.6737, Accuracy: 7881/10000 (78.81%) Epoch: 17 Loss: 0.377 | Acc: 87.500% (56/64) Loss: 0.361 | Acc: 87.407% (5650/6464) Loss: 0.371 | Acc: 87.096% (11204/12864) Loss: 0.367 | Acc: 87.194% (16797/19264) Loss: 0.368 | Acc: 87.247% (22391/25664) Loss: 0.365 | Acc: 87.494% (28054/32064) Loss: 0.365 | Acc: 87.484% (33650/38464) 
Loss: 0.365 | Acc: 87.482% (39248/44864)
Test Loss: 0.562 | Test Acc: 84.000% (84/100)
Test set: Average loss: 0.5070, Accuracy: 8373/10000 (83.73%)
Epoch: 18
Loss: 0.552 | Acc: 78.125% (50/64)
Loss: 0.357 | Acc: 88.026% (5690/6464)
Loss: 0.350 | Acc: 88.324% (11362/12864)
Loss: 0.354 | Acc: 88.050% (16962/19264)
Loss: 0.352 | Acc: 88.131% (22618/25664)
Loss: 0.354 | Acc: 88.021% (28223/32064)
Loss: 0.355 | Acc: 87.939% (33825/38464)
Loss: 0.357 | Acc: 87.874% (39424/44864)
Test Loss: 0.481 | Test Acc: 84.000% (84/100)
Test set: Average loss: 0.6533, Accuracy: 7988/10000 (79.88%)
Epoch: 19
Loss: 0.318 | Acc: 89.062% (57/64)
Loss: 0.333 | Acc: 88.428% (5716/6464)
Loss: 0.336 | Acc: 88.324% (11362/12864)
Loss: 0.337 | Acc: 88.434% (17036/19264)
Loss: 0.338 | Acc: 88.505% (22714/25664)
Loss: 0.342 | Acc: 88.392% (28342/32064)
Loss: 0.341 | Acc: 88.420% (34010/38464)
Loss: 0.344 | Acc: 88.367% (39645/44864)
Test Loss: 0.789 | Test Acc: 76.000% (76/100)
Test set: Average loss: 0.8728, Accuracy: 7404/10000 (74.04%)
Epoch: 20
Loss: 0.335 | Acc: 89.062% (57/64)
Loss: 0.317 | Acc: 89.295% (5772/6464)
Loss: 0.320 | Acc: 88.985% (11447/12864)
Loss: 0.329 | Acc: 88.678% (17083/19264)
Loss: 0.327 | Acc: 88.759% (22779/25664)
Loss: 0.331 | Acc: 88.585% (28404/32064)
Loss: 0.332 | Acc: 88.576% (34070/38464)
Loss: 0.330 | Acc: 88.675% (39783/44864)
Test Loss: 1.352 | Test Acc: 62.000% (62/100)
Test set: Average loss: 1.3439, Accuracy: 6225/10000 (62.25%)
Epoch: 21
Loss: 0.590 | Acc: 76.562% (49/64)
Loss: 0.322 | Acc: 89.186% (5765/6464)
Loss: 0.318 | Acc: 89.164% (11470/12864)
Loss: 0.317 | Acc: 89.104% (17165/19264)
Loss: 0.321 | Acc: 88.988% (22838/25664)
Loss: 0.317 | Acc: 89.100% (28569/32064)
Loss: 0.318 | Acc: 89.073% (34261/38464)
Loss: 0.318 | Acc: 89.027% (39941/44864)
Test Loss: 1.471 | Test Acc: 55.000% (55/100)
Test set: Average loss: 1.3264, Accuracy: 6469/10000 (64.69%)
Epoch: 22
Loss: 0.533 | Acc: 84.375% (54/64)
Loss: 0.310 | Acc: 89.387% (5778/6464)
Loss: 0.309 | Acc: 89.451% (11507/12864)
Loss: 0.312 | Acc: 89.332% (17209/19264)
Loss: 0.309 | Acc: 89.472% (22962/25664)
Loss: 0.309 | Acc: 89.459% (28684/32064)
Loss: 0.307 | Acc: 89.567% (34451/38464)
Loss: 0.307 | Acc: 89.575% (40187/44864)
Test Loss: 2.208 | Test Acc: 59.000% (59/100)
Test set: Average loss: 1.6997, Accuracy: 6088/10000 (60.88%)
Epoch: 23
Loss: 0.408 | Acc: 87.500% (56/64)
Loss: 0.281 | Acc: 90.145% (5827/6464)
Loss: 0.286 | Acc: 90.299% (11616/12864)
Loss: 0.288 | Acc: 90.210% (17378/19264)
Loss: 0.292 | Acc: 89.963% (23088/25664)
Loss: 0.295 | Acc: 89.820% (28800/32064)
Loss: 0.292 | Acc: 89.939% (34594/38464)
Loss: 0.294 | Acc: 89.814% (40294/44864)
Test Loss: 0.689 | Test Acc: 74.000% (74/100)
Test set: Average loss: 0.7813, Accuracy: 7586/10000 (75.86%)
Epoch: 24
Loss: 0.389 | Acc: 87.500% (56/64)
Loss: 0.266 | Acc: 90.919% (5877/6464)
Loss: 0.280 | Acc: 90.400% (11629/12864)
Loss: 0.279 | Acc: 90.454% (17425/19264)
Loss: 0.279 | Acc: 90.489% (23223/25664)
Loss: 0.283 | Acc: 90.351% (28970/32064)
Loss: 0.283 | Acc: 90.394% (34769/38464)
Loss: 0.284 | Acc: 90.364% (40541/44864)
Test Loss: 0.883 | Test Acc: 74.000% (74/100)
Test set: Average loss: 0.9419, Accuracy: 7293/10000 (72.93%)
Epoch: 25
Loss: 0.313 | Acc: 92.188% (59/64)
Loss: 0.257 | Acc: 91.136% (5891/6464)
Loss: 0.268 | Acc: 90.757% (11675/12864)
Loss: 0.265 | Acc: 91.051% (17540/19264)
Loss: 0.259 | Acc: 91.217% (23410/25664)
Loss: 0.258 | Acc: 91.258% (29261/32064)
Loss: 0.260 | Acc: 91.155% (35062/38464)
Loss: 0.263 | Acc: 91.046% (40847/44864)
Test Loss: 0.707 | Test Acc: 76.000% (76/100)
Test set: Average loss: 0.7634, Accuracy: 7739/10000 (77.39%)
Epoch: 26
Loss: 0.236 | Acc: 92.188% (59/64)
Loss: 0.251 | Acc: 91.368% (5906/6464)
Loss: 0.243 | Acc: 91.706% (11797/12864)
Loss: 0.240 | Acc: 91.746% (17674/19264)
Loss: 0.245 | Acc: 91.529% (23490/25664)
Loss: 0.247 | Acc: 91.458% (29325/32064)
Loss: 0.250 | Acc: 91.408% (35159/38464)
Loss: 0.248 | Acc: 91.528% (41063/44864)
Test Loss: 0.508 | Test Acc: 84.000% (84/100)
Test set: Average loss: 0.5389, Accuracy: 8354/10000 (83.54%)
Epoch: 27
Loss: 0.280 | Acc: 89.062% (57/64)
Loss: 0.228 | Acc: 92.389% (5972/6464)
Loss: 0.231 | Acc: 92.257% (11868/12864)
Loss: 0.240 | Acc: 91.990% (17721/19264)
Loss: 0.239 | Acc: 92.039% (23621/25664)
Loss: 0.240 | Acc: 91.972% (29490/32064)
Loss: 0.241 | Acc: 91.881% (35341/38464)
Loss: 0.242 | Acc: 91.844% (41205/44864)
Test Loss: 0.462 | Test Acc: 86.000% (86/100)
Test set: Average loss: 0.5628, Accuracy: 8320/10000 (83.20%)
Epoch: 28
Loss: 0.393 | Acc: 89.062% (57/64)
Loss: 0.224 | Acc: 92.373% (5971/6464)
Loss: 0.221 | Acc: 92.506% (11900/12864)
Loss: 0.218 | Acc: 92.535% (17826/19264)
Loss: 0.223 | Acc: 92.429% (23721/25664)
Loss: 0.222 | Acc: 92.437% (29639/32064)
Loss: 0.221 | Acc: 92.479% (35571/38464)
Loss: 0.219 | Acc: 92.529% (41512/44864)
Test Loss: 0.501 | Test Acc: 82.000% (82/100)
Test set: Average loss: 0.6946, Accuracy: 8014/10000 (80.14%)
Epoch: 29
Loss: 0.363 | Acc: 87.500% (56/64)
Loss: 0.207 | Acc: 92.822% (6000/6464)
Loss: 0.208 | Acc: 92.856% (11945/12864)
Loss: 0.206 | Acc: 92.940% (17904/19264)
Loss: 0.206 | Acc: 92.971% (23860/25664)
Loss: 0.209 | Acc: 92.839% (29768/32064)
Loss: 0.209 | Acc: 92.853% (35715/38464)
Loss: 0.210 | Acc: 92.807% (41637/44864)
Test Loss: 0.510 | Test Acc: 83.000% (83/100)
Test set: Average loss: 0.4381, Accuracy: 8613/10000 (86.13%)
Epoch: 30
Loss: 0.307 | Acc: 92.188% (59/64)
Loss: 0.188 | Acc: 93.704% (6057/6464)
